[AI Sparks] Issue 8: Your First AI Brain Cell (From Scratch)

![[AI Sparks] Issue 8: Your First AI Brain Cell (From Scratch)](/content/images/size/w1200/2025/12/18881_b66a88fda29fe547dfd323335fac0230-2-1.jpg)

Welcome back to AI Sparks!

For the past several issues, we've been working with powerful APIs like GPT and DALL-E, treating them like magical black boxes. We give them an input, and an intelligent output comes back.

But what if I told you that the "magic" inside those boxes is built from a surprisingly simple idea? You've probably heard the term neural network—it's the foundation of all modern generative AI, from ChatGPT to Gemini. While the concept comes up frequently in machine learning classes and online tutorials, many people still find it intimidating or hard to fully grasp.

So, for the next 2-3 issues, we're going on a journey to open that black box. We're going to demystify neural networks by building our own simple version from the ground up. This isn't about becoming a math expert; it's about gaining a deep, intuitive understanding of how an AI actually "thinks."

Today, we start with the single most fundamental component of neural networks: the artificial neuron.

Inside this Issue:

- 📡 AI Radar: From 1958 to Now: Why the Neuron Won?

- 💡 Concept Quick-Dive: The Artificial Neuron

- ⚙️ The Neuron's Toolkit: Translating Concepts to Math

- 🛠️ Hands-on Lab: Build an "Internship Advisor" Neuron

- 🚀 Level Up: When One Neuron Isn't Enough

- 👥 Community Spotlight: Sigmoid vs. ReLU

📡 AI Radar: From 1958 to Now: Why the Neuron Won?

What Happened?

In 1958, psychologist Frank Rosenblatt introduced the "Perceptron," an early artificial neuron that could learn from examples, designed to model how the brain learns. (The first mathematical model of a neuron, by McCulloch and Pitts, dates back to 1943.) The New York Times hyped it as the embryo of a computer that would "be able to walk, talk, see, write, reproduce itself and be conscious of its existence."

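It didn't happen. A single Perceptron was too simple: it can only separate its inputs with one straight dividing line, so it couldn't solve more complex problems. That disappointment contributed to long stretches known as "AI Winters," when funding dried up.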

But researchers didn't give up on the neuron architecture. They just needed more of them, and faster computers to run them. Around 2012, the "Deep Learning revolution" began when researchers realized that stacking these simple neurons into massive layers could solve problems previous approaches couldn't touch.

This sparked a decade of explosive growth. We saw machines master visual recognition (AlexNet), conquer complex games like Go (AlphaGo), and finally, master human language with the invention of the Transformer architecture in 2017—the "T" in ChatGPT. Today's massive Large Language Models are essentially just billions of these neurons working in concert.

Why It Matters:

For the machine learning series in AI Sparks, we are choosing to begin with neurons and neural networks. You might ask: Why not start with simpler statistical models like linear or logistic regression?

Those older models are powerful tools, but they are limited. They excel at simple, predictable relationships—like predicting house prices based on square footage. But they fail miserably at the messy complexity of the real world—like recognizing a face, understanding a joke, or writing a poem.

The artificial neuron is different. It is the architecture that has scaled best so far. When we connect enough of them together, they don't just hit a ceiling; they keep getting smarter. Since neurons and neural networks are the fundamental building blocks of every modern AI breakthrough, starting here gives you the solid foundation you need for the rest of your journey into AI.

The "So What" for Students?

The math you are doing by hand today—weights, bias, and activation—is the exact same math powering GPT-4 and Gemini. The only difference is scale. You aren't learning history; you are learning the atomic physics of the current AI revolution.

💡 Concept Quick-Dive: The Artificial Neuron

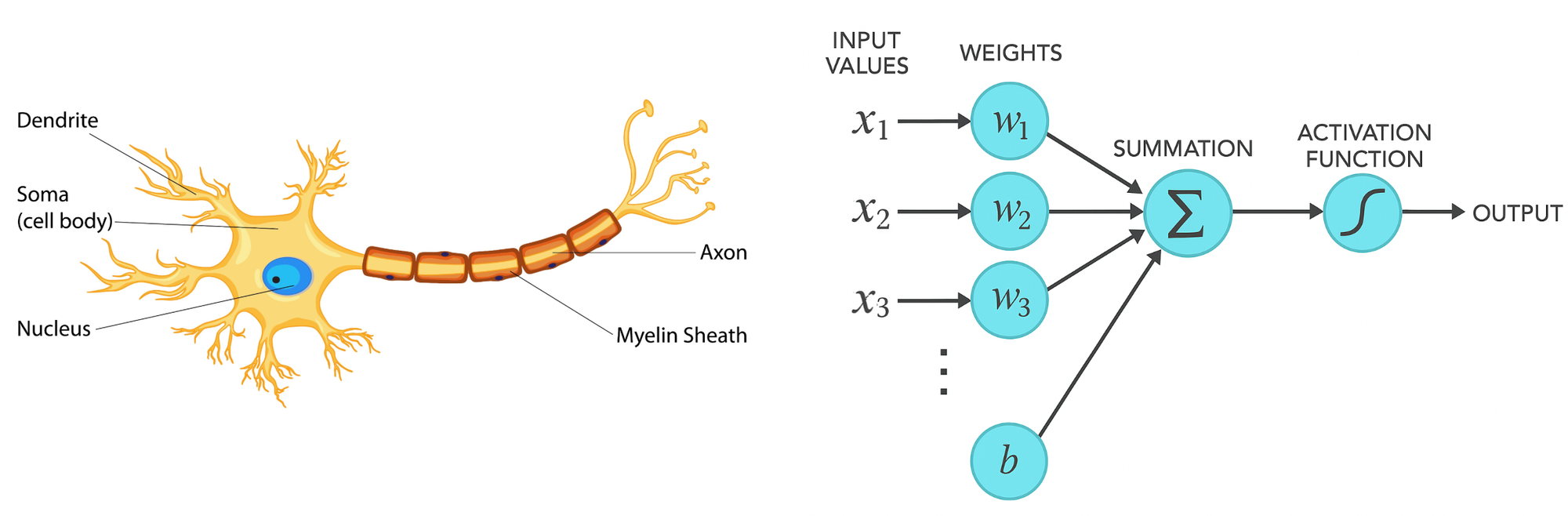

Even a massive, complex neural network is built from one simple component: the artificial neuron, which is inspired by the neurons in the human brain.

Let's first look at how a biological neuron works. The process is surprisingly straightforward:

- Receive Signals: The neuron collects electrical signals through its branches, called dendrites.

- Sum Them Up: All these incoming signals travel to the cell body, where they are combined or summed up.

- Make a Decision: If the total signal strength exceeds a certain threshold, the neuron "fires" and sends a single output signal down its axon.

Computer scientists looked at this biological process and created a mathematical model to mimic it. We can map the biology directly to our "artificial neuron":

- Signals → Inputs: Instead of electrical signals, our neuron receives numerical data as inputs.

- Synaptic Strength → Weights: In the brain, some connections are stronger than others. In our model, we assign a "weight" to each input to determine its importance.

- Cell Body → Weighted Sum: We combine all the inputs by multiplying them with their weights and adding them together.

- Firing Threshold → Activation Function: We pass the total sum through a mathematical function to decide the final output (e.g., 0 or 1). This mathematical function is called the activation function.
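The mapping above can be sketched in a few lines of Python. Treat this as a minimal illustration rather than a definitive implementation: the function name `simple_neuron`, the example weights, and the threshold value are all assumptions picked for demonstration.

```python
def simple_neuron(inputs, weights, threshold):
    """Toy neuron: weighted sum of the inputs, then a hard firing threshold."""
    # Cell body: multiply each input by its weight and add everything up.
    total = sum(x * w for x, w in zip(inputs, weights))
    # Firing threshold: output 1 ("fire") only if the total is large enough.
    return 1 if total >= threshold else 0


# Hypothetical example: two input signals with different importance.
print(simple_neuron([1, 0], weights=[0.6, 0.4], threshold=0.5))  # fires: 0.6 >= 0.5
print(simple_neuron([0, 1], weights=[0.6, 0.4], threshold=0.5))  # stays quiet: 0.4 < 0.5
```

Here the activation function is the simplest one possible, a yes/no step. Swapping it for a smoother function changes only the last line of the neuron.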

Let's make this concrete by considering a simple artificial neuron that acts as an Internship Advisor:

- Inputs: It receives two pieces of information (signals): Is the work interesting? and Is the pay good?

- Weighs Inputs: The neuron assigns a "weight" (importance score) to each factor. A picky student might assign high weights to both.

- Combines Inputs: It combines the weighted inputs, also considering the student's initial inclination (their "bias" – are they generally eager or hesitant?).

- Makes a Decision: Based on this combined evaluation, the neuron makes a recommendation – a strong "Accept!", a clear "Reject!", or something in between.

That simple process of weighing evidence and making a decision is the core of how artificial neurons function. Now let's look at the mathematical tools we use to represent this.

⚙️ The Neuron's Toolkit: Translating Concepts to Math

To build our neuron in code, we need to translate these intuitive steps into mathematical operations.

- Representing Inputs: Computers work with numbers. So, our first step is to represent inputs such as Yes/No numerically. The standard way is simple:

Yes = 1andNo = 0. So, the two inputs become numbers. - Weighing Inputs: We represent the importance of each input using a numerical "weight". A higher weight means that input has more influence on the final decision.

- Combining Inputs (Linear Combination + Bias): The most common way to combine the weighted inputs is using a linear combination:

(input1 * weight1) + (input2 * weight2) + ... + bias. We simply multiply each input by its weight and add them all up to calculate a total score. We also add a bias term, which acts as the neuron's initial inclination or threshold. Think of it this way: the bias is added to the weighted sum before the final decision. A negative bias lowers the total score, making the neuron more "hesitant" – it requires a stronger signal from the weighted inputs to overcome this negativity and produce a high output. Conversely, a positive bias raises the total score, making the neuron more "eager" to produce a high output even with weaker input signals. This linear structure is chosen because it's both intuitive (like a pro/con list) and mathematically simple. - Making the Decision (Activation Function): The total score from the linear combination could be any number, but we often want a clean, final output representing a clear decision (like

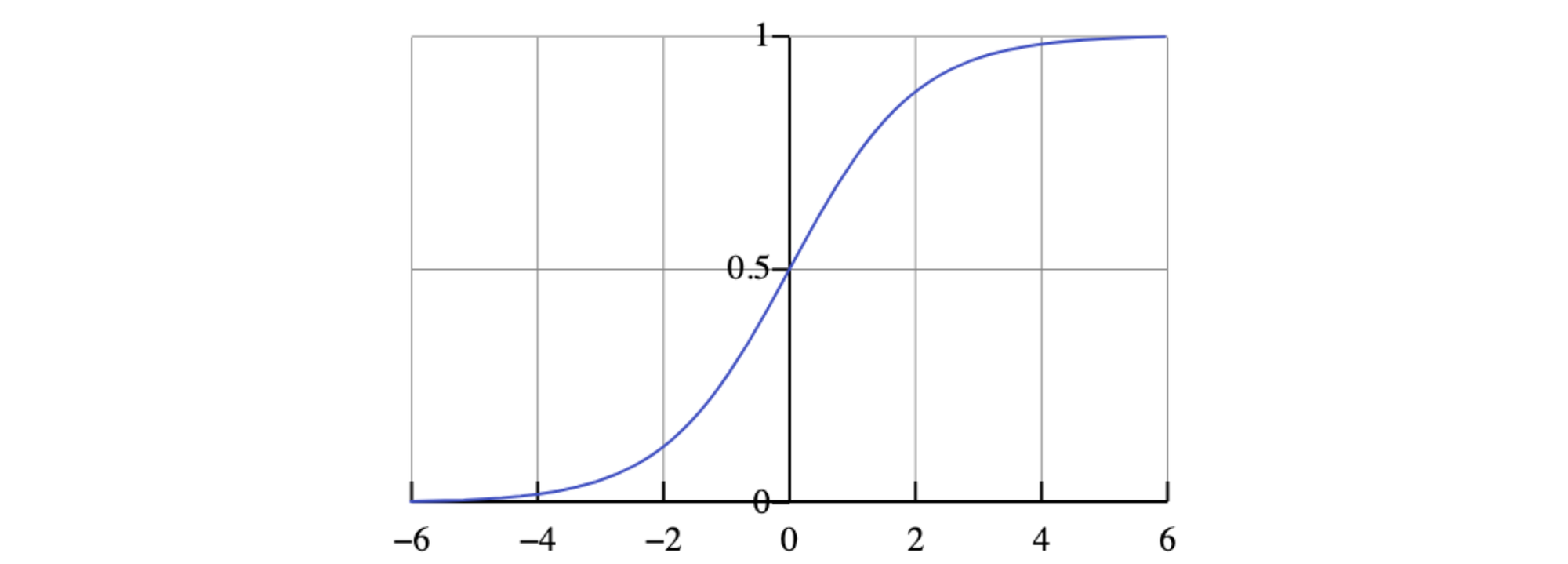

0for "Reject" and1for "Accept"). To achieve this, we use an intermediate step called an activation function. A classic choice is the sigmoid function,1 / (1 + exp(-x)), which takes the raw score and elegantly "squishes" it into a value between 0 and 1. This intermediate value is useful because its smooth curve helps the neuron learn effectively. We can then interpret this 0-to-1 value: a score close to 1 means "Accept," a score close to 0 means "Reject," and a score near 0.5 means the neuron is uncertain. If needed, we could even add a final threshold (e.g., > 0.5 means 1, otherwise 0) to get a strict binary output for Accept/Reject.
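To see the linear combination, the bias, and the sigmoid working together, here is a small sketch. The weights and bias values are hypothetical and were chosen only to show how a negative bias makes the neuron "hesitant" while a positive one makes it "eager"; the helper names `sigmoid` and `neuron_output` are likewise just illustrative.

```python
import math


def sigmoid(x):
    """Squish any real number into the range (0, 1)."""
    return 1 / (1 + math.exp(-x))


def neuron_output(inputs, weights, bias):
    """Linear combination of inputs and weights, plus bias, then sigmoid."""
    score = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(score)


# Encode Yes = 1 and No = 0: the work is interesting (1), but the pay is not good (0).
inputs = [1, 0]
weights = [2.0, 2.0]  # hypothetical importance scores

hesitant = neuron_output(inputs, weights, bias=-3.0)  # raw score = 2 - 3 = -1
eager = neuron_output(inputs, weights, bias=1.0)      # raw score = 2 + 1 = 3

print(f"hesitant neuron: {hesitant:.3f}")  # below 0.5, leans "Reject"
print(f"eager neuron:    {eager:.3f}")     # above 0.5, leans "Accept"

# Optional final threshold for a strict binary answer.
print(1 if eager > 0.5 else 0)
```

The inputs and weights are identical in both calls; only the bias differs, and that alone is enough to flip the recommendation.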

A Quick Note on Sigmoid Function:

- As `x` goes to `+∞`, the sigmoid function `1 / (1 + exp(-x))` approaches 1.

- As `x` goes to `-∞`, the sigmoid function `1 / (1 + exp(-x))` approaches 0.

- At `x = 0`, the sigmoid function `1 / (1 + exp(-x)) = 0.5`.
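These three properties are easy to check numerically. The snippet below is a quick sanity check, not part of the neuron itself. It sticks to moderate values of `x` because this naive formula can overflow in `math.exp` for very large negative inputs.

```python
import math


def sigmoid(x):
    """Naive sigmoid; fine for moderate x, may overflow for very negative x."""
    return 1 / (1 + math.exp(-x))


# Evaluate the sigmoid at a few points from strongly negative to strongly positive.
for x in [-10, -2, 0, 2, 10]:
    print(f"sigmoid({x:>3}) = {sigmoid(x):.5f}")
```

The printed values climb steadily from just above 0, through exactly 0.5 at `x = 0`, to just below 1.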

With these mathematical tools defined, we're ready to implement our neuron in Python.

🛠️ Hands-on Lab: Build an "Internship Advisor" Neuron

Now, let's turn these concepts into working code. We're going to build the neuron for the "Internship Advisor" we discussed earlier. Our goal is to create an artificial neuron that helps a student make a decision based on two key pieces of information: Is the work interesting? and Is the pay good? We want it to mimic the decision-making of a picky student, following a strict rule: it should only recommend accepting an offer (output 1) if both the work is interesting AND the pay is good. Otherwise, it must recommend rejecting it (output 0).
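Before choosing any weights, it can help to write the strict rule down as a truth table that a finished neuron must reproduce. The sketch below is one way to do that. The names `TARGET` and `matches_target` are hypothetical helpers, and plain Python logic stands in for the neuron so the check can run on its own.

```python
# Target behavior: accept (1) only when the work is interesting AND the pay is good.
# Keys are (is_interesting, is_good_pay), encoded as Yes = 1 and No = 0.
TARGET = {
    (0, 0): 0,
    (0, 1): 0,
    (1, 0): 0,
    (1, 1): 1,
}


def matches_target(decide):
    """Return True if decide(interesting, pay) reproduces every row of TARGET."""
    return all(decide(i, p) == expected for (i, p), expected in TARGET.items())


# Sanity check with plain Python logic standing in for the neuron.
print(matches_target(lambda i, p: int(i and p)))  # True: this is the picky rule
print(matches_target(lambda i, p: int(i or p)))   # False: too easy to please
```

Any candidate neuron can then be passed to `matches_target` to confirm it behaves like the picky student on all four input combinations.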